Article Reviewed by Gordon Johnson, Subzero Senior CFD Manager

Data centers are not a single category. Understanding the distinctions between micro, edge, and hyperscale deployments is essential for matching infrastructure to workload. Getting that match wrong is expensive.

The term “data center” covers an increasingly wide range of facilities, from a compact self-contained enclosure on a factory floor to a campus drawing hundreds of megawatts from the grid. Micro data centers, edge data centers, and hyperscale data centers each serve a different operational purpose. The differences come down to three things: scale, proximity to the workload, and what the facility is designed to do.

What Is a Micro Data Center?

A micro data center is a compact, self-contained computing deployment placed as close as possible to where the data is being generated or processed. The form factor is small, often a few racks or a single enclosed unit, and the location is typically a remote site, factory floor, retail location, cell tower, or branch office.

The core use case is local processing where sending data back to a centralized facility would introduce too much latency or require more bandwidth than the site can support. A manufacturing line running real-time AI inference, a remote oil and gas facility, and a retail environment running edge analytics are all candidates for a micro data center. The compute stays on-site because it has to.

Thermal management inside micro deployments presents specific challenges. Compact enclosures concentrate heat in small spaces without the airflow advantages of a larger facility. Containment and cooling solutions for micro deployments require precise engineering relative to the footprint.

What Is an Edge Data Center?

Edge data centers are smaller, distributed facilities positioned geographically closer to end users, devices, and data sources than a centralized cloud or enterprise campus. The purpose is to reduce latency and ease bandwidth pressure on core infrastructure.

Where a micro data center serves a single site or workload, an edge data center can support metro-scale or enterprise-scale demand. Content delivery, 5G network infrastructure, real-time analytics, smart manufacturing, and IoT aggregation are common use cases. Edge does not mean small by default: some edge facilities carry meaningful power loads. The defining characteristic is proximity and distribution, not size.

The distinction from hyperscale is intent. Edge facilities handle the latency-sensitive portion of a workload, not the full compute picture. A platform might run its core processing in a hyperscale facility but distribute caching or inference to edge sites across multiple metros. The two architectures work together more often than they compete.

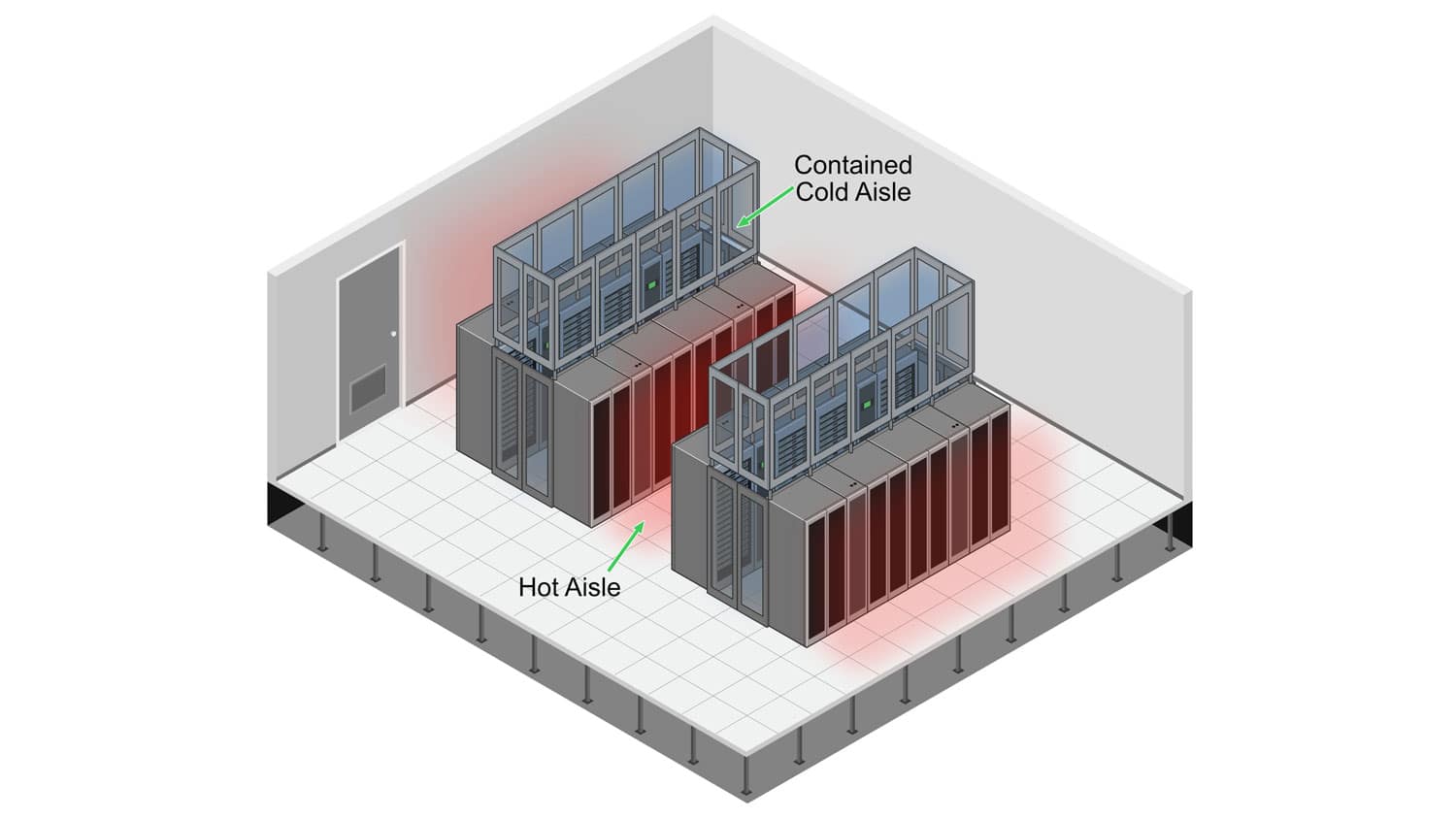

Cooling and containment design for edge environments reflects the constraints of distributed deployment. Sites are spread out, maintenance access varies, and space is typically limited. Hot and cold aisle containment strategies have to account for those site-specific realities.

What Is a Hyperscale Data Center?

Hyperscale data centers are large-scale campus facilities built for massive compute and storage growth. The scale is significant. The U.S. Department of Energy has documented hyperscale connection requests of 300 to 1,000 megawatts or more. NREL describes the range as roughly 100 megawatts to a few gigawatts. These are utility-level infrastructure commitments, not incremental capacity additions.

The major cloud providers operate hyperscale facilities to support their global platforms, and AI training workloads have accelerated that buildout. Rack density, chip and server power consumption, and heat output continue to rise with AI demand, pushing hyperscale design toward denser, more thermally intensive configurations.

Efficiency at this scale is a financial priority. Cooling can account for up to 50% of a data center’s overall energy consumption. A meaningful improvement in power usage effectiveness (PUE, the ratio of total facility energy to IT equipment energy) across a 500 MW campus translates to tens of millions of dollars annually. Hot and cold aisle containment, airflow management, and Computational Fluid Dynamics (CFD) modeling are direct drivers of operational cost at hyperscale.
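The "tens of millions" figure can be sanity-checked with simple arithmetic. The sketch below is illustrative only. It assumes the 500 MW refers to a constant IT load, that PUE improves from 1.40 to 1.30, and that electricity costs $60 per MWh. None of these inputs come from a real facility.

```python
HOURS_PER_YEAR = 8760


def annual_energy_cost(it_load_mw: float, pue: float, price_per_mwh: float) -> float:
    """Annual electricity cost in dollars at a constant IT load."""
    # PUE = total facility power / IT power, so facility power = IT power * PUE.
    facility_mw = it_load_mw * pue
    return facility_mw * HOURS_PER_YEAR * price_per_mwh


def annual_pue_savings(it_load_mw: float, pue_before: float,
                       pue_after: float, price_per_mwh: float) -> float:
    """Dollars saved per year when PUE drops and the IT load is unchanged."""
    before = annual_energy_cost(it_load_mw, pue_before, price_per_mwh)
    after = annual_energy_cost(it_load_mw, pue_after, price_per_mwh)
    return before - after


# Hypothetical inputs: 500 MW IT load, PUE 1.40 -> 1.30, $60/MWh.
savings = annual_pue_savings(500, 1.40, 1.30, 60)
print(f"Estimated annual savings: ${savings:,.0f}")
```

Under these assumptions a 0.10 reduction in PUE removes 50 MW of continuous overhead, worth roughly $26 million per year. The result scales linearly with the IT load, the size of the PUE change, and the energy price.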

How the Three Compare

| | Micro Data Center | Edge Data Center | Hyperscale Data Center |
| --- | --- | --- | --- |
| Footprint | Single enclosure to a few racks | Small to mid-size distributed facility | Campus-scale, 100 MW to several GW |
| Typical Power | Sub-100 kW | Generally sub-20 MW | 100 MW to 1,000 MW+ |
| Proximity | On-site, at the point of use | Metro or regional | Centralized, purpose-sited |
| Primary Advantage | Lowest latency, smallest footprint | Distributed low-latency compute at scale | Massive capacity, economies of scale |
| Common Workloads | Industrial IoT, local inference, remote ops | Streaming, 5G, real-time analytics | AI training, cloud platforms, large-scale storage |
| Cooling Complexity | High density in a small space | Distributed site constraints | Utility-level optimization |

Why These Differences Matter

Latency. Regardless of architecture, the physics don’t change. A micro data center delivers single-digit millisecond response times because the compute is on-site. Edge brings that response to the regional level. Hyperscale facilities, however optimized, cannot replicate what proximity delivers for real-time applications.
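The physics in question is mostly propagation delay. As a rough sketch, light in optical fiber travels at about two thirds of its speed in a vacuum, so distance alone sets a floor on round-trip time. The velocity factor and the distances below are assumptions chosen for illustration. The result covers propagation only and excludes switching, queuing, and processing time.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458
FIBER_VELOCITY_FACTOR = 0.67  # assumed typical value for optical fiber


def round_trip_ms(distance_km: float) -> float:
    """Minimum round-trip propagation delay over fiber, in milliseconds."""
    speed_km_s = SPEED_OF_LIGHT_KM_S * FIBER_VELOCITY_FACTOR
    return 2 * distance_km / speed_km_s * 1000


# Hypothetical one-way fiber distances for each deployment type.
examples = [
    ("on-site (micro)", 0.1),
    ("metro edge", 50),
    ("regional edge", 300),
    ("distant hyperscale region", 2000),
]
for label, km in examples:
    print(f"{label:>26}: {round_trip_ms(km):6.2f} ms")
```

Real fiber routes are longer than straight-line distance and add equipment delay at every hop, so observed latency will be higher than these floors.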

Power and cooling. Hyperscale campuses require direct engagement with utilities and sophisticated grid management. Edge and micro deployments often lack dedicated utility infrastructure, which means the enclosure design and thermal management carry more weight. There’s less room for error.

Scalability. Hyperscale is the right choice for organizations that need to scale compute in large increments quickly. Edge and micro are the right choice for organizations deploying across many distributed sites.

Workload fit. As Gordon Johnson, Subzero’s Senior CFD Manager, has noted: “It’s more important to focus on the actual workload for the data center that we’re designing or managing.” One design does not fit all.

Choosing the Right Architecture

The decision comes down to a straightforward set of questions. Where does the workload need to run? How sensitive is it to latency? What does growth look like over the next five years?

Micro data centers are the right fit when local compute is required at a site where centralized infrastructure isn’t viable and latency requirements are strict. Edge data centers fit when the need is distributed compute closer to users or devices, supporting real-time applications at regional scale. Hyperscale is the answer when massive centralized capacity is the requirement, whether for cloud infrastructure, AI training, or large-scale data storage.

The simplest rule: identify the smallest footprint that still meets the latency, power, and growth requirements for the actual workload.
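That rule can be sketched as a first-pass filter. The power ceilings below follow the comparison table (sub-100 kW for micro, sub-20 MW for edge). The latency floors, the `Tier` class, and the `choose_tier` function are hypothetical placeholders for illustration, not a sizing methodology.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tier:
    name: str
    max_power_kw: float    # power ceiling for the tier (from the comparison table)
    min_latency_ms: float  # assumed best-case round-trip latency the tier offers


# Ordered from smallest footprint to largest. Latency floors are assumptions.
TIERS = [
    Tier("micro", 100, 1),
    Tier("edge", 20_000, 5),
    Tier("hyperscale", float("inf"), 20),
]


def choose_tier(power_kw: float, latency_budget_ms: float,
                annual_growth: float = 0.0, years: int = 5) -> Optional[str]:
    """Return the smallest tier that meets power, growth, and latency needs."""
    # Size for projected demand at the end of the planning horizon.
    projected_kw = power_kw * (1 + annual_growth) ** years
    for tier in TIERS:
        fits_power = projected_kw < tier.max_power_kw
        fits_latency = tier.min_latency_ms <= latency_budget_ms
        if fits_power and fits_latency:
            return tier.name
    return None  # no single tier fits; the workload may need to be split


print(choose_tier(30, 50))                      # small, latency-tolerant load
print(choose_tier(60, 10, annual_growth=0.2))   # outgrows 100 kW within five years
print(choose_tier(50_000, 2))                   # too large for edge, too strict for hyperscale
```

A `None` result corresponds to the hybrid case described earlier, where core processing runs centrally and the latency-sensitive portion is distributed to smaller sites.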

Containment and Thermal Management Across All Three

Regardless of scale, thermal management is where infrastructure performance is won or lost. Cooling accounts for up to 50% of overall energy consumption in many facilities. Hot and cold aisle containment reduces that burden by separating cold supply air from hot equipment exhaust so that exhaust does not recirculate to the IT intake. The result is uniform, predictable supply temperatures and a warmer return to the cooling units.
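One way to see why a warmer return matters is the sensible heat relation for air: heat removed equals density times volumetric flow times specific heat times the supply-to-return temperature difference. The sketch below uses standard approximate properties for air near room conditions. The 10 kW rack and the two temperature differences are hypothetical values, and the model ignores humidity, altitude, and bypass airflow.

```python
AIR_DENSITY_KG_M3 = 1.2            # approximate, near sea level and room temperature
AIR_SPECIFIC_HEAT_J_KG_K = 1005.0  # approximate specific heat of dry air


def required_airflow_m3_s(heat_load_kw: float, delta_t_k: float) -> float:
    """Airflow needed to carry away a heat load at a given supply-to-return delta-T."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    heat_load_w = heat_load_kw * 1000
    return heat_load_w / (AIR_DENSITY_KG_M3 * AIR_SPECIFIC_HEAT_J_KG_K * delta_t_k)


# Hypothetical 10 kW rack: mixed air (small delta-T) vs contained air (larger delta-T).
for label, delta_t in [("uncontained, 8 K", 8.0), ("contained, 14 K", 14.0)]:
    flow = required_airflow_m3_s(10, delta_t)
    print(f"{label:>18}: {flow:.2f} m^3/s")
```

For a fixed heat load, required airflow falls in inverse proportion to the temperature difference, which is why keeping supply and exhaust air separated reduces the volume of air the cooling units have to move.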

At hyperscale, containment is a core efficiency driver that affects operational cost directly. At edge, it manages heat in constrained spaces with limited margin for thermal variance. At micro scale, it prevents hot spots in enclosures where even a few degrees of temperature variance can reduce equipment life and uptime.

The design approach changes with the scale and the site. The underlying principle does not.

Subzero Engineering works across all three deployment types, from micro enclosure thermal design to large-scale containment systems and CFD consulting for hyperscale campuses. To discuss your facility’s requirements, schedule a consultation with our engineering team.